There is a growing disconnect in the AI space. If you spend any time on YouTube or tech blogs, you are bombarded with “game-changing” agents and “revolutionary” workflows.

However, as someone who prioritizes local-first AI, I’ve noticed a frustrating trend: most of these reviews are essentially commercials for paid SaaS products. They distort reality by relying on massive cloud clusters that none of us are using in our home labs.

Even more baffling are the “local” demos. I’ve seen reviewers with monster workstations (dual 4090s or even A100s) running tiny, lightweight models. It’s the ultimate clickbait: they showcase a smooth experience that is functionally useless for those of us pushing the limits of what our hardware can actually handle via Ollama or LM Studio.

For me, the real journey happens in the friction

Take my current setup: a Mac Studio M4 Max (base model). It is a powerhouse, but it isn’t magic. When I run Hermes Agent, I can feel the physical toll of the computation; nothing heats up the Mac Studio chassis quite like Hermes. While the agent is working, the case becomes noticeably warm to the touch, a stark contrast to my other workflows.

By contrast, when I use VSCodium with the Continue extension, the experience is seamless. The machine might get lukewarm, but it never reaches that “thermal event” feel. For grammar corrections, spell-checking my blog posts, or light programming and web design, Continue is my go-to nine times out of ten. It is efficient, integrated, and respectful of the hardware.

Then there is the question of time. My recent attempt to have an agent rename a WordPress page was a humbling reminder: if a task can be done manually in three clicks, doing it manually is still the fastest option. The “agentic” dream is seductive, but the overhead of local execution can often outweigh the benefit for trivial tasks.

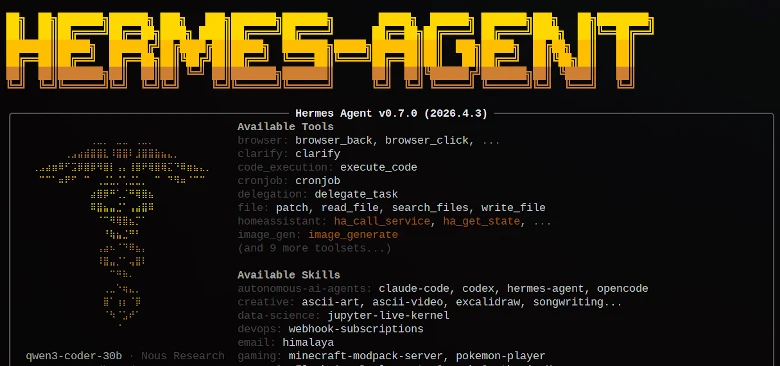

However, I haven’t abandoned Hermes Agent. There is a specific class of work where it is simply indispensable. When I need to perform deep research—tasks like “Go online, analyze this specific webpage, and synthesize a meta description,” or “Crawl this site and report every broken link”—Hermes is the only tool in my arsenal capable of the job. It handles the “heavy lifting” of web interaction and reasoning that a simple IDE extension cannot.

The heat, the latency, and the failures

I am an early adopter. I’ve watched the landscape shift violently in just three years, and I am still amazed by the progress. But I believe the community needs more honest reporting and fewer polished demos. We need to talk about the heat, the latency, and the failures.

Of course, in the world of local AI, a “fact” has a very short half-life. Everything I’ve written here might be obsolete in two or three months. That is the nature of the beast. But for now, I’ll keep my Mac Studio humming, my Linux box running the agent, and my eyes open to the difference between a YouTube thumbnail and a real-world terminal.

I have also noticed that many of those YouTubers eventually pivot to a paid API, and by doing so they remove the very reason for using a local agent in the first place. There is absolutely no point in the overhead of setting up a Linux box and a Mac Studio if the “brain” still lives on a corporate server.