For the last few years, the AI world has been obsessed with benchmarks. We see endless tables, percentages, and MMLU scores. But for those of us actually building things, those numbers say very little about what a model is like to work with.

I don’t care if a model scores 2% higher on a logic test if it hallucinates functions in my code or crawls at one token per second on my hardware. I care about the flow. I care about the “aha!” moment when the AI understands a complex request and delivers a clean, working solution.

After three years of iterating through almost every available tool, I believe I’ve finally found the “sweet spot” for my Mac Studio and gaming laptop.

The Evolution: From Cloud to Local

I was an early adopter. I saw the potential of local AI the moment I installed GPT4All nearly three years ago. Like many of you, I spent a long time in the “Cloud Era,” jumping between ChatGPT, Copilot, and Perplexity (which, in my experience, was consistently the smartest of the three for research).

As I started building more specialized apps—small, focused tools that did one thing perfectly—my needs changed. I began wanting to automate the sensitive parts of my life: money management, payments, and private data.

Suddenly, the “Cloud” was no longer practical. The idea of sending my financial logic to a third-party server was a non-starter. I needed the intelligence of a high-end LLM, but I needed it to live on my own silicon.

The Local Frontier: Qwen and the Shift to Ollama

Thankfully, we are living in a golden age of open-weights models. Labs like Alibaba (Qwen), Meta (Llama), and Mistral began releasing models that could actually code. For a long time, Qwen2.5-Coder-32B was my gold standard for the final polish on my software.

However, the experience of running these models is just as important as the models themselves. After experimenting with various loaders, I discovered Ollama.

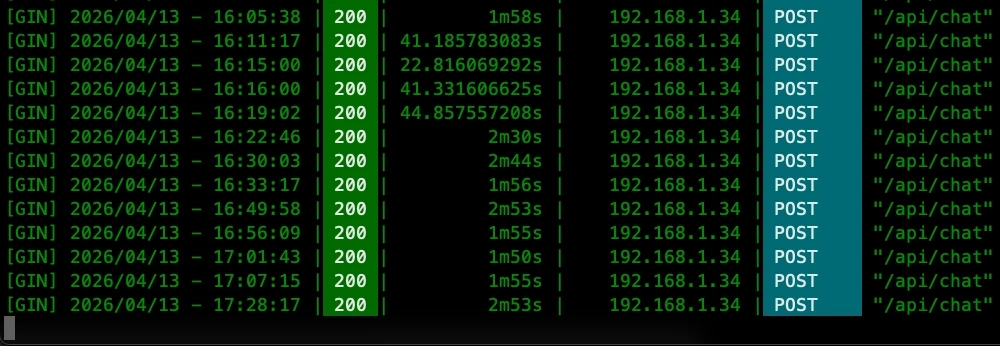

If you haven’t tried Ollama, it is, quite simply, the most satisfying way to run LLMs locally. It strips away the complexity and lets you deploy a model with a single command. It turned my home lab from a series of configuration headaches into a streamlined production line.
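
Once a model is pulled, Ollama also serves it over a local HTTP API, which is handy for checking the "tokens per second" feel with real numbers. What follows is a minimal sketch, not my exact setup: it assumes Ollama is listening on its default port (11434) and uses the `/api/generate` endpoint with streaming turned off. The helper names (`build_payload`, `tokens_per_second`, `generate`) are made up for illustration.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(model: str, prompt: str) -> bytes:
    """Encode a non-streaming generate request as JSON bytes."""
    body = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(body).encode("utf-8")


def tokens_per_second(result: dict) -> float:
    """Compute generation speed from the timing fields in Ollama's reply.

    eval_count is the number of generated tokens and eval_duration is
    reported in nanoseconds. Returns 0.0 if the timing is missing.
    """
    duration_ns = result.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0
    return result.get("eval_count", 0) / (duration_ns / 1e9)


def generate(model: str, prompt: str) -> dict:
    """Send one prompt to the local Ollama server and return the parsed reply."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(model, prompt),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())
```

With the server running, `generate("<your-model-tag>", "Write a haiku about RAM")` returns a dict whose `response` field holds the text, and passing that dict to `tokens_per_second` gives the generation speed. Use whatever tag `ollama list` shows on your machine; I am deliberately not hard-coding one here.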

The New Favorite: Google’s Gemma 4

With Ollama running smoothly, I started testing the latest releases. That is when I encountered the Gemma 4 family from Google.

I’ll be honest: I didn’t expect to be this impressed. These days, roughly 80% of my work is powered by Gemma 4:31B.

Running this on my 2025 Mac Studio (M4 Max with 36GB of memory) is an absolute dream. The performance is fluid, the logic is sharp, and the output is remarkably concise. It doesn’t just “work”—it enables a level of productivity that I previously thought required a massive server farm.

For those searching for the right model, the Gemma 4 family is incredibly versatile. Depending on your RAM, you can run the smaller, lightning-fast variants or move up to the 31B version for deeper reasoning and complex coding tasks.
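
If you want a rough way to decide which size to try, the back-of-the-envelope arithmetic looks something like the sketch below. It is only a rule of thumb: it counts the weights alone (parameters times bits per weight), and the 8 GB of headroom for the OS, context cache, and other apps is my own assumed default, not a measured figure. The function names are illustrative.

```python
def estimate_weights_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


def fits_in_memory(
    params_billions: float,
    bits_per_weight: float,
    memory_gb: float,
    headroom_gb: float = 8.0,  # assumed allowance for OS, context cache, other apps
) -> bool:
    """Return True if the weights plus the assumed headroom fit in memory_gb."""
    needed_gb = estimate_weights_gb(params_billions, bits_per_weight) + headroom_gb
    return needed_gb <= memory_gb


# A 31B model at 4 bits per weight needs about 15.5 GB for weights alone.
print(estimate_weights_gb(31, 4))
# The same model at 16 bits per weight would not fit in 36 GB.
print(fits_in_memory(31, 16, 36))
```

By this estimate a 31B model at 4-bit quantization leaves room to spare on a 36GB machine, while the unquantized 16-bit weights would not fit at all. Real usage varies with context length and the quantization format, so treat the result as a starting point for experimenting.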

The Synergy: Gemma 4 and Hermes Agent

The real “magic” happened when I paired Gemma 4 with Hermes Agent.

Running autonomous agents locally is usually a resource-heavy nightmare. However, Gemma 4 seems to have a symbiotic relationship with Hermes. The agent runs faster, the responses are more coherent, and most importantly, it doesn’t stress the Mac as much as other models of similar size. It feels optimized, efficient, and—above all—stable.

Final Thoughts: Trust Your Gut, Not the Table

If you are currently staring at a leaderboard trying to figure out which LLM to download, my advice is this: Ignore the tables. Trust the experience.

The “best” LLM is the one that fits your hardware, respects your privacy, and understands your intent without a dozen prompts. For me, on the M4 Max, that is Gemma 4 running via Ollama.

Stop searching for the “perfect score” and start experimenting with the tools that actually move the needle for your projects.